Facebook expands fight against COVID-19 vaccine misinformation to include kids

It will remove false claims about the COVID-19 vaccine and its use on children.

Vaccine misinformation has been a pervasive issue on Facebook for years, and it wasn’t until earlier this year that the website finally introduced policies to address the problem. Now, the social network has expanded those policies and its COVID-19 vaccination efforts to include kids, shortly after the FDA authorized the emergency use of the Pfizer COVID-19 vaccine for children ages five to eleven.

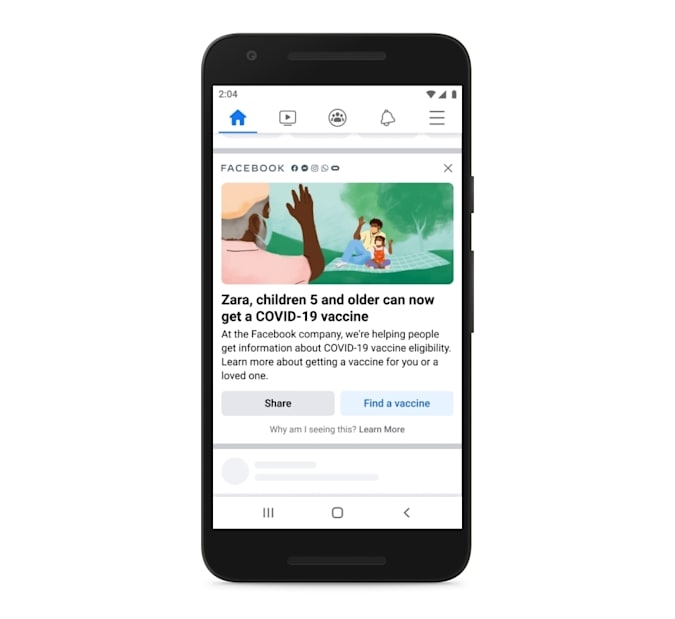

In the coming weeks, it will send in-feed reminders in English and Spanish to users in the US that the COVID-19 vaccine is now available for kids. Those reminders will also include a link that’ll help users find the nearest vaccination site. Perhaps more importantly, it will expand its anti-vaccine misinformation policies to remove claims that COVID-19 vaccines for kids do not exist and that the vaccine for children is untested. It will also remove any claim that COVID-19 vaccines can kill or seriously harm kids, that they’re not effective for children at all, or that anything other than a COVID-19 vaccine can inoculate children against the virus.

Facebook says its fight against vaccine misinformation is part of an ongoing effort in partnership with the CDC, WHO and other health authorities. It promises to keep updating its policies and to ban any new claims about the COVID-19 vaccine for children that emerge in the future. The website, which now operates under its parent company Meta, says it has removed more than 20 million pieces of content from Facebook and Instagram since the beginning of the pandemic. As of August 2021, it had also banned 3,000 accounts, groups and pages for repeatedly breaking its health misinformation policies.
