NVIDIA’s RTX 3000 cards make counting teraflops pointless

Schrödinger’s CUDA core.

Teraflops have been a popular way to measure “graphical power” for years. The term refers to the number of calculations a GPU can perform each second, and while it’s been on spec sheets forever, the teraflop has more recently gone mainstream, appearing in the marketing for consoles like the Xbox Series X. With GPU core counts reaching five figures, it’s nice to have a simple point of comparison. Unfortunately, teraflops have never been less useful.

The term teraflop comes from FLOPS, or “floating-point operations per second,” which simply means “calculations involving decimal-point numbers, per second.” Tera means trillion, so put together, teraflops means “trillion floating-point operations per second.”

The most popular GPU among Steam users today, NVIDIA’s venerable GTX 1060, is capable of 4.4 teraflops; the soon-to-be-usurped 2080 Ti can handle around 13.5; and the upcoming Xbox Series X can manage 12. These numbers are calculated by taking the number of shader cores in a chip, multiplying that by the chip’s peak (boost) clock speed, and then multiplying by the number of floating-point operations each core can complete per clock (two, because a fused multiply-add counts as two operations). Unlike many figures we see in the PC space, it’s a fair and transparent calculation, but that doesn’t make it a good measure of gaming performance.
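The calculation is simple enough to sketch in a few lines of Python. The core counts and boost clocks below are the reference specifications as we understand them (assumed values, not figures from this article), and the default of two operations per clock follows the usual convention of counting a fused multiply-add as two.

```python
def teraflops(shader_cores: int, boost_clock_mhz: float, flops_per_clock: int = 2) -> float:
    """Peak FP32 throughput: cores x clock x floating-point operations per clock."""
    return shader_cores * boost_clock_mhz * 1e6 * flops_per_clock / 1e12


# Assumed reference specs: (shader cores, boost clock in MHz)
SPECS = {
    "GTX 1060 6GB": (1280, 1708),
    "RTX 2080 Ti": (4352, 1545),
    "Xbox Series X": (3328, 1825),
}

for name, (cores, clock_mhz) in SPECS.items():
    print(f"{name}: {teraflops(cores, clock_mhz):.2f} teraflops")
```

This prints roughly 4.37, 13.45 and 12.15, which round to the headline figures. Note that nothing in the formula knows anything about architecture, caches or memory.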

AMD’s RX 580, a 6.17-teraflop GPU from 2017, for example, performs similarly to the RX 5500, a budget 5.2-teraflop card the company launched last year. This sort of “hidden” improvement can be attributed to many factors, from architectural changes to game developers making use of new features, but almost every GPU family arrives with these generational gains. That’s why the Xbox Series X, for example, is expected to outperform the Xbox One X by more than the “12 versus 6 teraflop” figures suggest. (Ditto for the PS5 and the PS4 Pro.)
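Running the same formula on the AMD and Xbox examples shows the size of the “hidden” gap. The specs are assumptions on our part: 2,304 shaders at 1,340MHz for the RX 580, a 22-CU configuration (1,408 shaders at 1,845MHz) for the RX 5500, and 2,560 shaders at 1,172MHz for the Xbox One X.

```python
def teraflops(shader_cores: int, boost_clock_mhz: float, flops_per_clock: int = 2) -> float:
    """Peak FP32 throughput: cores x clock x floating-point operations per clock."""
    return shader_cores * boost_clock_mhz * 1e6 * flops_per_clock / 1e12


# Assumed reference specs (shader cores, boost clock in MHz)
rx_580 = teraflops(2304, 1340)
rx_5500 = teraflops(1408, 1845)
xbox_one_x = teraflops(2560, 1172)
xbox_series_x = teraflops(3328, 1825)

# On paper the older RX 580 has the edge, even though the two cards perform similarly.
print(f"RX 580 paper advantage over RX 5500: {rx_580 / rx_5500 - 1:.0%}")
# The consoles' paper ratio is about 2x; the real-world gap is expected to be larger.
print(f"Series X vs One X on paper: {xbox_series_x / xbox_one_x:.2f}x")
```

The RX 580 comes out about 19 percent ahead on paper, which is exactly the kind of figure generational improvements quietly erase.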

The point is that even within a single company’s lineup, year-to-year changes in how chips and games are designed make it harder to discern what exactly “a teraflop” means for gaming performance. Compare an AMD card with an NVIDIA card of any generation and the figure has even less value.

All of which brings us to the RTX 3000 series. These arrived with some truly shocking specs. The RTX 3070, a $500 card, is listed as having 5,888 CUDA cores (NVIDIA’s name for shader cores) capable of 20 teraflops. And the new $1,500 flagship card, the RTX 3090? 10,496 cores, for 36 teraflops. For context, the RTX 2080 Ti, as of right now the best “consumer” graphics card available, has 4,352 CUDA cores. NVIDIA, then, has increased the number of cores in its flagship by over 140 percent, and its teraflop figure by over 160 percent.
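Those percentages can be checked with the same back-of-the-envelope formula. The core counts are NVIDIA’s advertised figures; the boost clocks (1,545MHz, 1,725MHz and 1,695MHz) are assumed reference values.

```python
def teraflops(shader_cores: int, boost_clock_mhz: float, flops_per_clock: int = 2) -> float:
    """Peak FP32 throughput: cores x clock x floating-point operations per clock."""
    return shader_cores * boost_clock_mhz * 1e6 * flops_per_clock / 1e12


rtx_2080_ti = teraflops(4352, 1545)
rtx_3070 = teraflops(5888, 1725)
rtx_3090 = teraflops(10496, 1695)

core_gain = 10496 / 4352 - 1            # flagship-to-flagship core count
tflops_gain = rtx_3090 / rtx_2080_ti - 1  # flagship-to-flagship teraflops

print(f"RTX 3070: {rtx_3070:.1f} TF, RTX 3090: {rtx_3090:.1f} TF")
print(f"Flagship core-count increase: {core_gain:.0%}")
print(f"Flagship teraflop increase: {tflops_gain:.0%}")
```

That works out to about 141 percent more cores and 165 percent more teraflops; the teraflop gain outpaces the core gain because the 3090’s assumed boost clock is also higher.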

Well, it has, and it hasn’t.

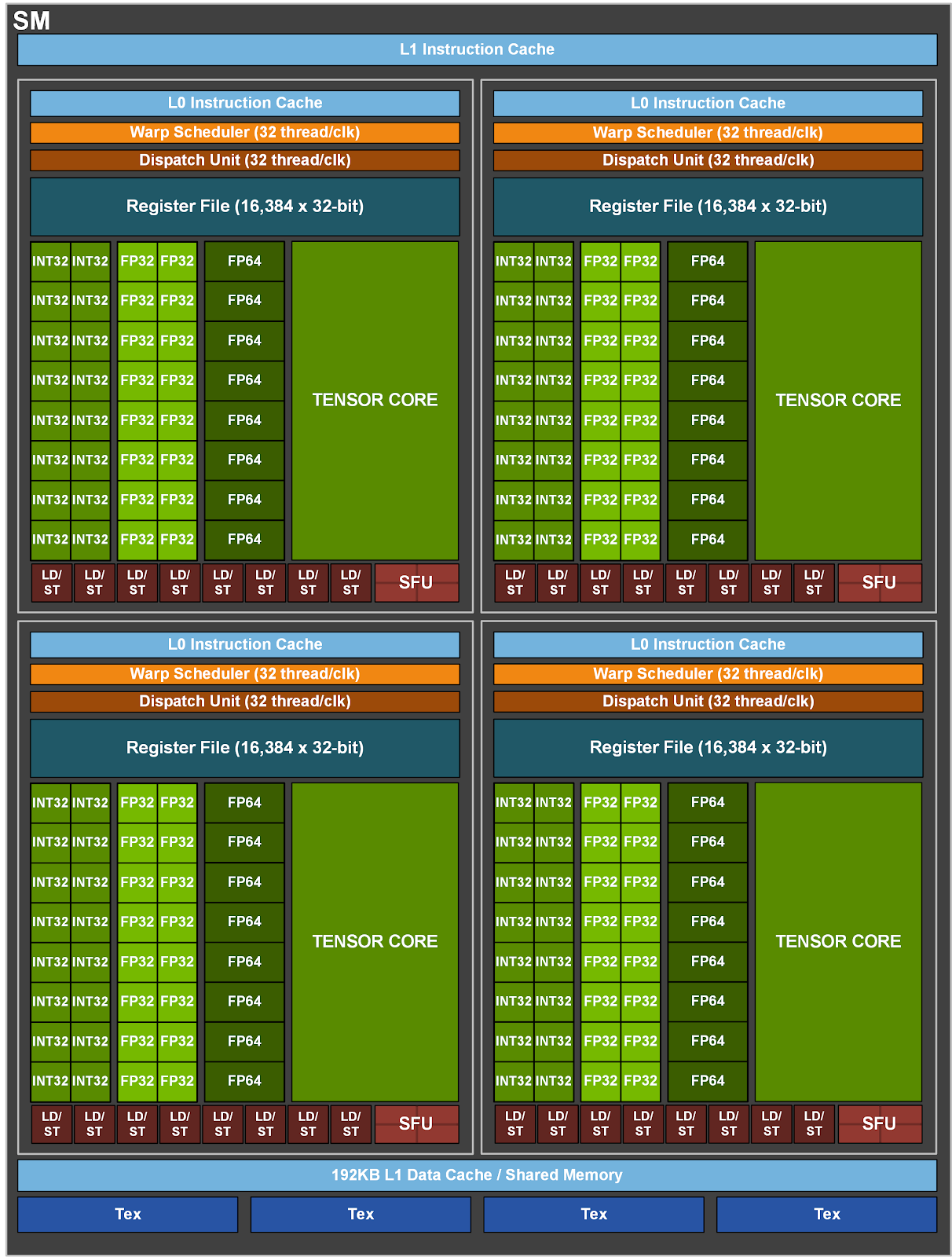

NVIDIA cards are made up of many “streaming multiprocessors,” or SMs. Each of the 2080 Ti’s 68 “Turing” SMs contains, among many other things, 64 “FP32” CUDA cores dedicated to floating-point math and 64 “INT32” cores dedicated to integer math (calculations with whole numbers).
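A toy model of the SM makes the arithmetic explicit: 68 SMs with 64 FP32 cores each gives the 4,352 CUDA cores NVIDIA advertises. The `TuringSM` class is a hypothetical simplification for illustration that ignores tensor cores, RT cores and everything else in the SM.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TuringSM:
    """Simplified Turing SM: only the two core banks described above."""
    fp32_cores: int = 64   # dedicated floating-point cores
    int32_cores: int = 64  # dedicated integer cores


RTX_2080_TI_SMS = 68
sm = TuringSM()
fp32_total = RTX_2080_TI_SMS * sm.fp32_cores
int32_total = RTX_2080_TI_SMS * sm.int32_cores

# Only the FP32 total is marketed as "CUDA cores".
print(fp32_total, int32_total)
```

Both banks total 4,352 cores, but only one of them shows up on the spec sheet.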

The big innovation in the Turing SM, aside from the AI and ray-tracing acceleration, was the ability to execute integer and floating-point math simultaneously. This was a significant change from the prior generation, Pascal, where banks of cores would flip between integer and floating-point on an either-or basis.

The RTX 3000 cards are built on an architecture NVIDIA calls “Ampere,” and its SM, in some ways, takes both the Pascal and the Turing approach. Ampere keeps the 64 FP32 cores as before, but the 64 other cores are now designated as “FP32 and INT32.” So, half the Ampere cores are dedicated to floating-point, but the other half can perform either floating-point or integer math, just like in Pascal.

With this switch, NVIDIA is now counting each SM as containing 128 FP32 cores, rather than the 64 that Turing had. The 3070’s “5,888 cuda cores” are perhaps better described as “2,944 cuda cores, and 2,944 cores that can be cuda.”
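Extending the same toy model to Ampere shows where the doubled figure comes from. The `AmpereSM` class is again a hypothetical simplification, and the 46-SM count for the RTX 3070 is our assumption (5,888 divided by 128 cores per SM).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AmpereSM:
    """Simplified Ampere SM: one dedicated FP32 bank, one FP32-or-INT32 bank."""
    fp32_only: int = 64
    fp32_or_int32: int = 64

    @property
    def advertised_cuda_cores(self) -> int:
        # Both banks can execute FP32, so NVIDIA counts them all as CUDA cores.
        return self.fp32_only + self.fp32_or_int32


RTX_3070_SMS = 46  # assumption: 5,888 / 128
sm = AmpereSM()
advertised = RTX_3070_SMS * sm.advertised_cuda_cores
dedicated = RTX_3070_SMS * sm.fp32_only
flexible = RTX_3070_SMS * sm.fp32_or_int32

print(advertised, dedicated, flexible)
```

The advertised 5,888 splits evenly into 2,944 dedicated FP32 cores and 2,944 cores that can switch between FP32 and INT32.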

As games have become more complex, developers have begun to lean more heavily on integers. An NVIDIA slide from the original 2018 RTX launch suggested that integer math, on average, made up about a quarter of in-game GPU operations.

The downside of the Turing SM is the potential for under-utilization. If, for example, a workload is 25-percent integer math, most of the integer cores, roughly a third of all the cores in the SM, could be sitting around with nothing to do. That’s the thinking behind this new semi-unified core structure, and, on paper, it makes a lot of sense: You can still run integer and floating-point operations simultaneously, but when those integer cores would otherwise be dormant, they can run floating-point instead.
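A simplified throughput model puts numbers on that trade-off. It assumes perfect scheduling, one operation per core per clock and a fixed integer share, and it ignores memory, latency and everything else; treat it as a sketch under those assumptions, not as how NVIDIA’s scheduler actually behaves. The function names are hypothetical.

```python
def ops_per_clock(fp_only: int, int_only: int, shared: int, int_fraction: float) -> float:
    """Best-case operations per clock for a core mix running a fixed INT/FP ratio.

    fp_only: cores that can only do floating-point math
    int_only: cores that can only do integer math
    shared: cores that can do either (Ampere's second bank)
    """
    limits = [fp_only + int_only + shared]  # can't exceed the total core count
    if int_fraction > 0:
        limits.append((int_only + shared) / int_fraction)  # integer-side bottleneck
    if int_fraction < 1:
        limits.append((fp_only + shared) / (1 - int_fraction))  # float-side bottleneck
    return min(limits)


def idle_fraction(fp_only: int, int_only: int, shared: int, int_fraction: float) -> float:
    """Share of cores with nothing to do at the best achievable throughput."""
    total = fp_only + int_only + shared
    return 1 - ops_per_clock(fp_only, int_only, shared, int_fraction) / total


# Per-SM core mixes running a 25-percent-integer workload
print(f"Turing SM idle: {idle_fraction(64, 64, 0, 0.25):.0%}")
print(f"Ampere SM idle: {idle_fraction(64, 0, 64, 0.25):.0%}")
```

The `min()` picks whichever constraint binds first. For Turing, the 64 FP32 cores are the bottleneck, so the integer bank is only a third busy and about 33 percent of the SM idles; Ampere’s flexible bank soaks up the slack, leaving nothing idle in this model.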

[This episode of Upscaled was produced before NVIDIA explained the SM changes.]

At NVIDIA’s RTX 3000 launch, CEO Jensen Huang said the RTX 3070 was “more powerful than the RTX 2080 Ti.” Using what we now know about Ampere’s design (its integer and floating-point split), along with clock speeds and teraflops, we can sketch how things might pan out. In that “25-percent integer” workload, 4,416 of the 3070’s cores could be running FP32 math, with the other 1,472 handling the necessary INT32.
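The split above, and the resulting paper FP32 figures, can be sketched as follows. The `ampere_split` helper is hypothetical and only valid up to a 50-percent integer share; the boost clocks (1,725MHz for the 3070, 1,545MHz for the 2080 Ti) are assumed reference values.

```python
def teraflops(cores: float, boost_clock_mhz: float, flops_per_clock: int = 2) -> float:
    """Peak FP32 throughput: cores x clock x floating-point operations per clock."""
    return cores * boost_clock_mhz * 1e6 * flops_per_clock / 1e12


def ampere_split(cuda_cores: int, int_fraction: float) -> tuple[float, float]:
    """Split advertised Ampere cores into (FP32, INT32) for a given integer share.

    Only valid up to 50% integer: beyond that the flexible bank is exhausted
    and dedicated FP32 cores start idling, which this sketch does not model.
    """
    if not 0 <= int_fraction <= 0.5:
        raise ValueError("sketch only covers integer shares from 0 to 0.5")
    int_cores = cuda_cores * int_fraction
    return cuda_cores - int_cores, int_cores


fp_cores, int_cores = ampere_split(5888, 0.25)
print(fp_cores, int_cores)

# The 2080 Ti runs INT32 on its separate bank, so all 4,352 FP32 cores stay on floats.
rtx_3070_fp = teraflops(fp_cores, 1725)
rtx_2080_ti_fp = teraflops(4352, 1545)
print(f"FP32 on paper: 3070 {rtx_3070_fp:.1f} TF vs 2080 Ti {rtx_2080_ti_fp:.1f} TF")
```

That gives the 3070 a paper FP32 edge of roughly 13 percent in this workload. It is raw arithmetic, not a benchmark, and it ignores memory capacity and bandwidth entirely.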

Coupled with all the other changes Ampere brings, the 3070 could outperform the 2080 Ti by perhaps 10 percent, assuming the game doesn’t mind having 8GB of memory to work with instead of 11GB. In the absolute (and highly unlikely) worst-case scenario, where a workload is extremely integer-dependent, it could behave more like the 2080. On the other hand, if a game requires very little integer math, the boost over the 2080 Ti could be enormous.
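Sweeping the integer share through a simplified model shows both ends of that range. As before, the model assumes perfect scheduling and ignores memory; the whole-GPU core mixes and boost clocks (including the RTX 2080’s 2,944 FP32 cores at 1,710MHz) are assumed reference specs, and the function names are hypothetical.

```python
def ops_per_clock(fp_only: int, int_only: int, shared: int, int_fraction: float) -> float:
    """Best-case operations per clock for a core mix running a fixed INT/FP ratio."""
    limits = [fp_only + int_only + shared]
    if int_fraction > 0:
        limits.append((int_only + shared) / int_fraction)
    if int_fraction < 1:
        limits.append((fp_only + shared) / (1 - int_fraction))
    return min(limits)


def fp32_tflops(fp_only: int, int_only: int, shared: int,
                boost_clock_mhz: float, int_fraction: float) -> float:
    """Floating-point share of best-case throughput, in teraflops (2 ops per FMA)."""
    fp_ops = (1 - int_fraction) * ops_per_clock(fp_only, int_only, shared, int_fraction)
    return fp_ops * boost_clock_mhz * 1e6 * 2 / 1e12


# Assumed whole-GPU mixes: (FP32-only, INT32-only, shared, boost MHz)
GPUS = {
    "RTX 3070": (2944, 0, 2944, 1725),
    "RTX 2080 Ti": (4352, 4352, 0, 1545),
    "RTX 2080": (2944, 2944, 0, 1710),
}

for share in (0.0, 0.25, 0.5, 0.75):
    row = ", ".join(f"{name} {fp32_tflops(*mix, share):.1f}" for name, mix in GPUS.items())
    print(f"{share:.0%} integer -> FP32 TF: {row}")
```

With no integer work, the 3070’s paper FP32 figure is about 50 percent above the 2080 Ti’s; from 50-percent integer upward, its flexible bank is fully occupied with integer math and it lands at roughly the RTX 2080’s level.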

Guesswork aside, we do have one point of comparison so far: a Digital Foundry video comparing the RTX 3080 to the RTX 2080. DF saw a 70 to 90 percent lift across generations in several games that NVIDIA presented for testing, with the gap larger in titles that use RTX features like ray tracing. That range gives a glimpse of the sort of variable performance gain we’d expect from the new shared cores. It’ll be interesting to see how a larger suite of games behaves, as NVIDIA is likely to have put its best foot forward with the sanctioned game selection. What you won’t see is the nearly 3x improvement that the jump from the 2080’s teraflop figure to the 3080’s would imply.
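The “nearly 3x” figure falls straight out of the teraflop formula. The specs below (2,944 and 8,704 CUDA cores, both boosting to 1,710MHz) are assumed reference values.

```python
def teraflops(shader_cores: int, boost_clock_mhz: float, flops_per_clock: int = 2) -> float:
    """Peak FP32 throughput: cores x clock x floating-point operations per clock."""
    return shader_cores * boost_clock_mhz * 1e6 * flops_per_clock / 1e12


rtx_2080 = teraflops(2944, 1710)
rtx_3080 = teraflops(8704, 1710)
paper_ratio = rtx_3080 / rtx_2080

print(f"RTX 2080: {rtx_2080:.1f} TF, RTX 3080: {rtx_3080:.1f} TF")
print(f"Paper uplift: {paper_ratio:.2f}x")
# For comparison, Digital Foundry's reported 70-90 percent lift is 1.7x-1.9x.
```

Because the assumed clocks match, the paper ratio is just the core-count ratio, about 2.96x, well above the 1.7x to 1.9x DF measured.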

With the first RTX 3000 cards arriving in weeks, you can expect reviews to give you a firm idea of Ampere performance soon. Even now, though, it feels safe to say that Ampere represents a monumental leap forward for PC gaming. The $499 3070 is likely to trade blows with the current flagship, and the $699 3080 should offer more than enough performance for those who might previously have opted for the “Ti.” However these cards end up lining up, it’s clear that their worth can no longer be captured by a single figure like teraflops.
