Oops! This MIT robot knows it made a mistake by reading human brainwaves

Scientists at the Massachusetts Institute of Technology have created a robot that can effectively learn to read people’s minds to understand when it’s made a mistake.

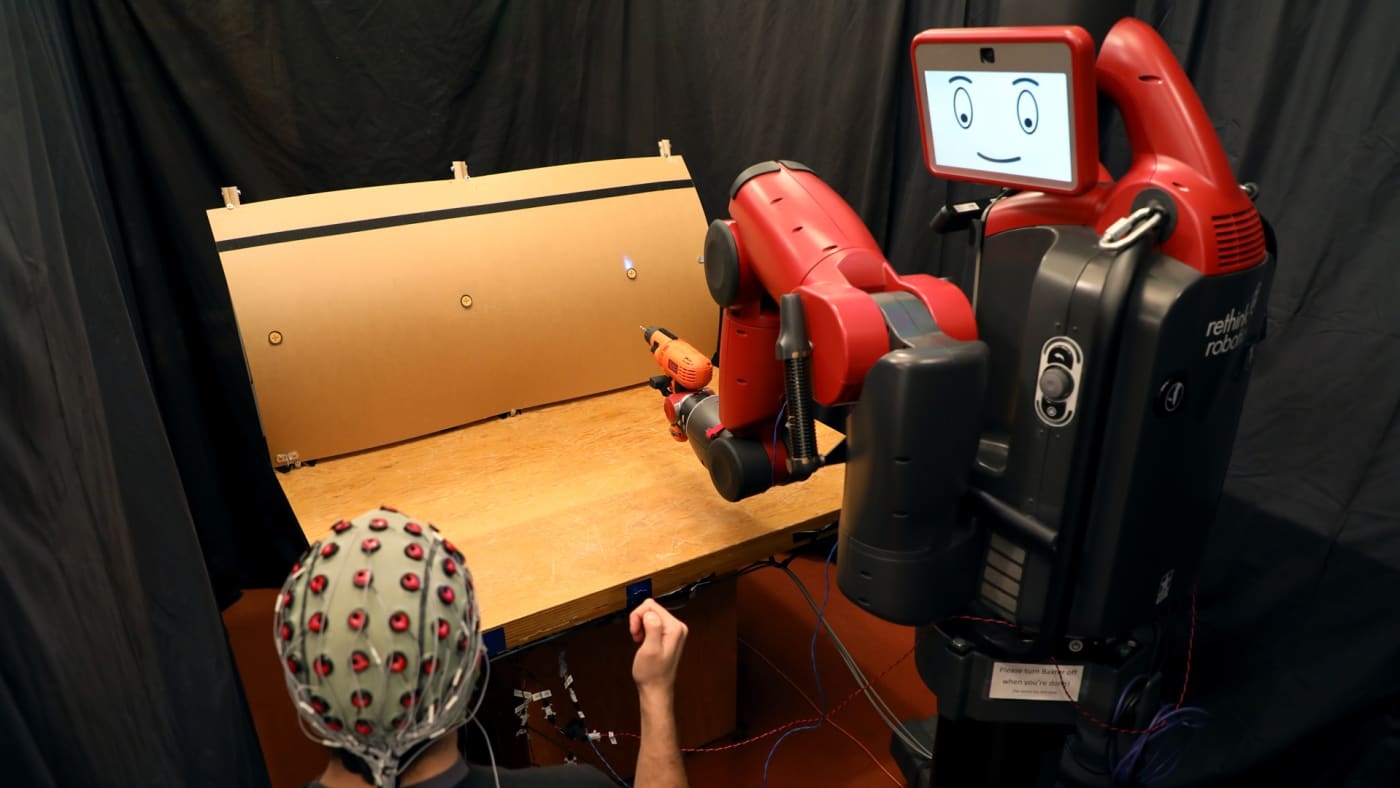

In an experiment, the robot, a Baxter unit from Rethink Robotics, is programmed to reach toward a spot on a mock plane fuselage to simulate an industrial robot drilling holes. A human wearing an electroencephalography (EEG) cap that detects brainwaves, along with electromyography (EMG) electrodes that detect muscle impulses, is told which of three spots on the fuselage the robot is supposed to reach toward.

If the robot reaches toward the wrong spot, a neural network system is designed to detect, through EEG signals, brainwaves corresponding to what’s called “error-related potential,” a brain state that automatically arises when people see a mistake being made. The robot then pauses, allowing the human to use hand gestures, detected by the EMG electrodes, that tell the robot where to move.
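The detect-pause-correct loop described above can be sketched roughly as follows. This is an illustrative simulation only, not the researchers' code: the target names, the `errp_detected` callable standing in for the EEG classifier, and the `gesture_target` callable standing in for the EMG gesture decoder are all hypothetical.

```python
import random

# Hypothetical labels for the three spots on the mock fuselage.
TARGETS = ("left", "center", "right")


def choose_target(intended, accuracy, rng):
    """Robot's initial pick: the intended spot with probability `accuracy`."""
    if rng.random() < accuracy:
        return intended
    # Otherwise pick one of the two wrong spots at random.
    return rng.choice([t for t in TARGETS if t != intended])


def run_trial(intended, accuracy, errp_detected, gesture_target, rng):
    """Simulate one reach with human supervision.

    errp_detected(is_wrong) -> bool  stands in for the EEG error detector.
    gesture_target(intended) -> str  stands in for the EMG gesture decoder.
    Both are assumptions made for this sketch.
    """
    pick = choose_target(intended, accuracy, rng)
    if errp_detected(pick != intended):
        # The robot pauses and lets the human's gesture select the spot.
        pick = gesture_target(intended)
    return pick


# Example: idealized detectors that never misread the human.
rng = random.Random(0)
final = run_trial("center", 0.7, lambda wrong: wrong, lambda t: t, rng)
```

With idealized detectors every trial ends on the intended spot. Swapping in noisier callables is one way to explore how missed or false detections would affect the outcome.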

“This work combining EEG and EMG feedback enables natural human-robot interactions for a broader set of applications than we’ve been able to do before using only EEG feedback,” said Daniela Rus, director of MIT’s Computer Science and Artificial Intelligence Laboratory and one of the researchers involved in the project, in a statement.

The robot was programmed to initially choose the correct spot 70% of the time, but it was accurate more than 97% of the time when including the human corrections. The robot was even able to detect brain and muscle signals from people who hadn’t worked with it before without needing to update its training between participants.
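As a back-of-the-envelope reading of those two figures, one can ask what share of the robot's initial mistakes must have been fixed. The sketch below assumes, as a simplification not stated in the article, that human feedback never turns a correct initial choice into a wrong one. The function name is hypothetical.

```python
def implied_recovery_rate(initial_accuracy, final_accuracy):
    """Fraction of initial errors that must be corrected to reach final_accuracy.

    Assumes corrections only ever fix wrong picks (a simplifying assumption):
        final = initial + (1 - initial) * recovery
    """
    error_rate = 1.0 - initial_accuracy
    if error_rate <= 0:
        raise ValueError("initial_accuracy must be below 1.0")
    return (final_accuracy - initial_accuracy) / error_rate


# 70% initial accuracy, 97% after corrections (figures from the article).
rate = implied_recovery_rate(0.70, 0.97)
```

Under that assumption the result is roughly 0.9, meaning about nine in ten initial mistakes would have been caught and corrected. Since the article reports "more than 97%," this would be a lower bound.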

“What’s great about this approach is that there’s no need to train users to think in a prescribed way,” said Joseph DelPreto, an MIT PhD student and lead author on a paper slated to be presented at the Robotics: Science and Systems conference in Pittsburgh this month. “The machine adapts to you, and not the other way around.”

Teaching robots to understand human nonverbal cues and signals could make them safer and more efficient at working with people, including people who have difficulty speaking or moving, the researchers say.

Fast Company
